后端实现记录+总结 任务:

架构图

数据处理

数据库交互

大数据平台交互(后端与HDFS和Hive进行交互)

接口设计与实现

Ajax请求处理

安全性-身份验证

用例测试

1.创建项目,配置文件 1 2 3 # port8080

Spring启动文件—Main.java

1 2 3 4 5 6 7 8 9 10 11 12 13 package hokdata;import org.springframework.boot.SpringApplication;import org.springframework.boot.autoconfigure.SpringBootApplication;import org.springframework.boot.web.servlet.ServletComponentScan;@SpringBootApplication @ServletComponentScan public class Main {public static void main (String[] args) {

2.创建数据库,配置MySQL连接 建立数据库 1 2 3 4 5 6 7 8 9 10 CREATE DATABASE hok;

创建相应的实体类 Heros 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 package hokdata.pojo;import lombok.AllArgsConstructor;import lombok.Data;import lombok.NoArgsConstructor;@Data @AllArgsConstructor @NoArgsConstructor public class Heros {private Integer id;private String name;private String role;private String type;private Integer difficulty;

添加测试数据 1 2 3 4 5 6 7 8 9 10 11 12 13 14 USE hok;

MySQL的配置 配置文件 1 2 3 4 5 6 datasource.dbs.mysql.type =com.alibaba.druid.pool.DruidDataSource datasource.dbs.mysql.driver-class-name =com.mysql.cj.jdbc.Driver datasource.dbs.mysql.url =jdbc:mysql://localhost:3306/hok?useUnicode=true&characterEncoding=utf8 datasource.dbs.mysql.username =root datasource.dbs.mysql.password =123456

数据源的配置 配置文件 MySQLConf 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 package hokdata.conf;import com.alibaba.druid.pool.DruidDataSource;import org.apache.ibatis.session.SqlSessionFactory;import org.mybatis.spring.SqlSessionFactoryBean;import org.mybatis.spring.SqlSessionTemplate;import org.mybatis.spring.annotation.MapperScan;import org.springframework.beans.factory.annotation.Autowired;import org.springframework.beans.factory.annotation.Qualifier;import org.springframework.boot.context.properties.EnableConfigurationProperties;import org.springframework.context.annotation.Bean;import org.springframework.context.annotation.Configuration;import javax.sql.DataSource;@Configuration @MapperScan(basePackages = "hokdata.dao.mysql", sqlSessionFactoryRef = "mysqlSqlSessionFactory") @EnableConfigurationProperties(DataSourceProperties.class) public class MySQLConf {@Autowired private DataSourceProperties dataSourceProperties;@Bean("mysqlDataSource") public DataSource getMySQLDataSource () {DruidDataSource dataSource = new DruidDataSource ();"driver-class-name" ));"url" ));"username" ));"password" ));return dataSource;@Bean("mysqlSqlSessionFactory") public SqlSessionFactory getMySQLFactory (@Qualifier("mysqlDataSource") DataSource dataSource) throws Exception {SqlSessionFactoryBean bean = new SqlSessionFactoryBean ();return bean.getObject();@Bean("mysqlSqlSession") public SqlSessionTemplate mysqlSession (@Qualifier("mysqlSqlSessionFactory") SqlSessionFactory factory) {SqlSessionTemplate template = new SqlSessionTemplate (factory);return template;

配置文件 DataSourceProperties 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 package hokdata.conf;import lombok.Data;import org.springframework.boot.context.properties.ConfigurationProperties;import java.util.Map;@Data @ConfigurationProperties(prefix = DataSourceProperties.DS,ignoreUnknownFields = false) public class DataSourceProperties {static final String DS = "datasource.dbs" ;private Map<String, String> mysql;

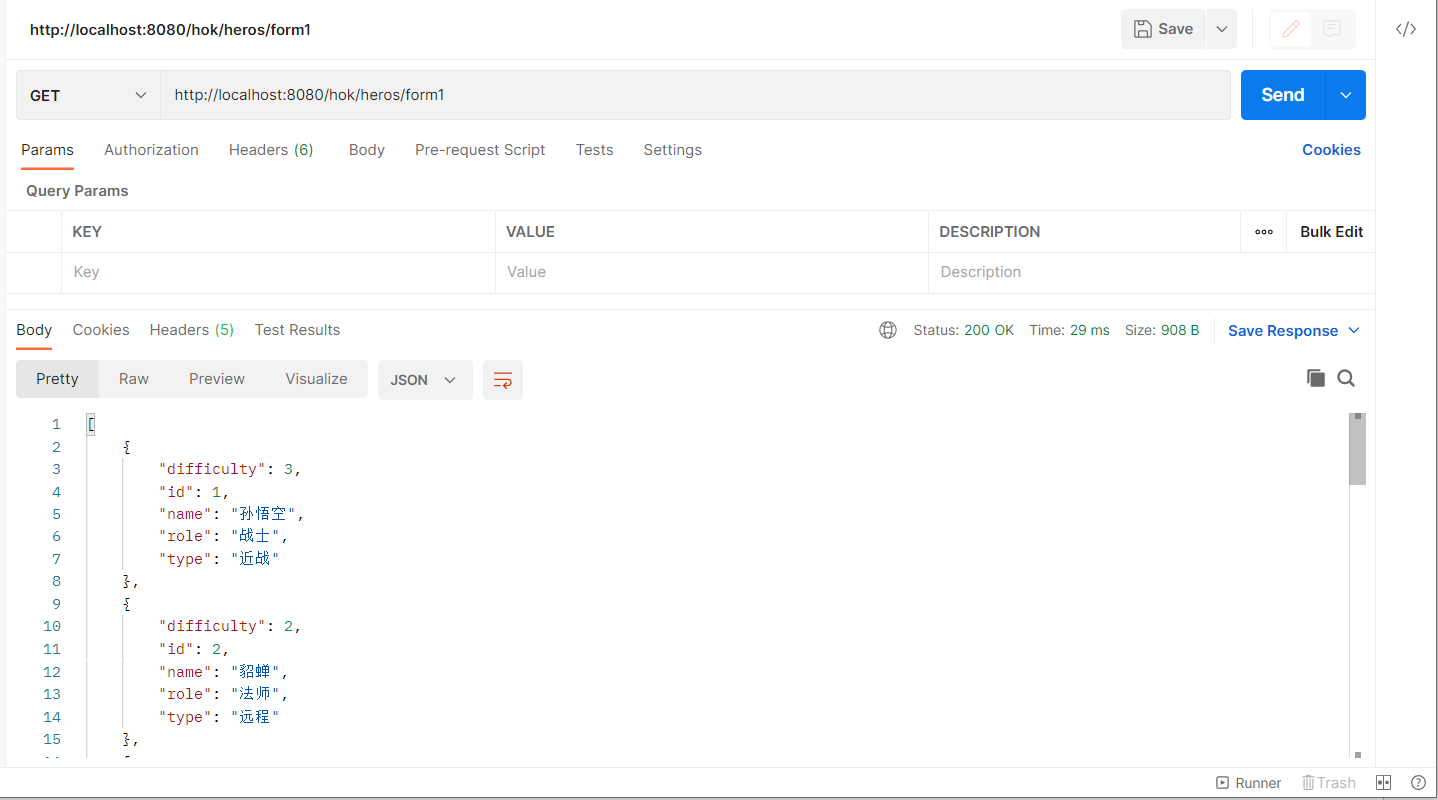

3.分层架构 | 实现一个简单的数据查询,测试连接 Dao层(数据访问层) 1 2 3 4 5 6 7 8 9 10 11 12 13 package hokdata.dao.mysql;import hokdata.pojo.Heros;import org.apache.ibatis.annotations.Mapper;import org.apache.ibatis.annotations.Select;import java.util.List;@Mapper public interface HerosDao {@Select("SELECT * from heros") list () ;

Service层(业务逻辑层) 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 package hokdata.service;import hokdata.dao.mysql.HerosDao;import hokdata.pojo.Heros;import org.springframework.beans.factory.annotation.Autowired;import org.springframework.stereotype.Service;import java.util.List;@Service public class HerosService {@Autowired private HerosDao herosDao;public List<Heros> getHeros () {return herosDao.listHeros();

Web层 (表示层) 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 package hokdata.web;import com.alibaba.fastjson.JSON;import hokdata.pojo.Heros;import hokdata.service.HerosService;import org.springframework.beans.factory.annotation.Autowired;import org.springframework.web.bind.annotation.RequestMapping;import org.springframework.web.bind.annotation.RestController;import java.util.List;@RestController @RequestMapping("/heros") public class HerosWeb {@Autowired private HerosService herosService;@RequestMapping("/form1") public String listHeros () {String heroStr = JSON.toJSONString(herosList);return heroStr;@RequestMapping("/form2") public String listHeros1 () {return "1" ;

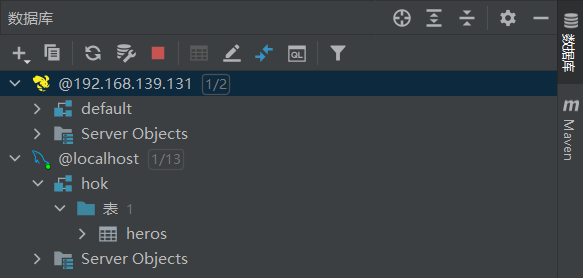

结果测试 4.Hive配置和测试 1. IDEA和数据库的连接 2. 配置文件 DataSourceProperties 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 package hokdata.conf;import lombok.Data;import org.springframework.boot.context.properties.ConfigurationProperties;import java.util.Map;@Data @ConfigurationProperties(prefix = DataSourceProperties.DS,ignoreUnknownFields = false) public class DataSourceProperties {static final String DS = "datasource.dbs" ;private Map<String, String> mysql;private Map<String, String> hive;

HDFSConf 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 package hokdata.conf;import org.apache.hadoop.fs.FileSystem;import org.springframework.beans.factory.annotation.Value;import org.springframework.context.annotation.Bean;import org.springframework.context.annotation.Configuration;import java.io.IOException;import java.net.URI;import java.net.URISyntaxException;@Configuration public class HDFSconf {@Value("${hdfs.replication}") private String replication;@Value("${hdfs.user}") private String user;@Value("${hdfs.uri}") private String uri;public org.apache.hadoop.conf.Configuration getConf () {Configuration conf = new org .apache.hadoop.conf.Configuration();"dfs.replication" ,replication);return conf;@Bean public FileSystem getFS () {FileSystem fs = null ;try {new URI (uri),getConf(),user);catch (URISyntaxException e) {catch (IOException e) {catch (InterruptedException e) {return fs;

HiveConf 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 package hokdata.conf;import com.alibaba.druid.pool.DruidDataSource;import org.apache.ibatis.session.SqlSessionFactory;import org.mybatis.spring.SqlSessionFactoryBean;import org.mybatis.spring.SqlSessionTemplate;import org.mybatis.spring.annotation.MapperScan;import org.springframework.beans.factory.annotation.Autowired;import org.springframework.beans.factory.annotation.Qualifier;import org.springframework.boot.context.properties.EnableConfigurationProperties;import org.springframework.context.annotation.Bean;import org.springframework.context.annotation.Configuration;import javax.sql.DataSource;@Configuration @MapperScan(basePackages = "hokdata.dao.hive", sqlSessionFactoryRef = "hiveSqlSessionFactory") @EnableConfigurationProperties(DataSourceProperties.class) public class HiveConf {@Autowired private DataSourceProperties dataSourceProperties;@Bean("hiveDataSource") public DataSource getHiveDataSource () {new DruidDataSource ();"driver-class-name" ));"url" ));"username" ));"password" ));return dataSource;@Bean("hiveSqlSessionFactory") public SqlSessionFactory getHiveFactory (@Qualifier("hiveDataSource") DataSource dataSource) throws Exception {SqlSessionFactoryBean bean = new SqlSessionFactoryBean ();return bean.getObject();@Bean("hiveSqlSession") public SqlSessionTemplate hiveSession (@Qualifier("hiveSqlSessionFactory") SqlSessionFactory factory) {SqlSessionTemplate template = new SqlSessionTemplate (factory);return template;

3. 实体类HeroScore 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 package hokdata.pojo;import lombok.AllArgsConstructor;import lombok.Data;import lombok.NoArgsConstructor;@Data @AllArgsConstructor @NoArgsConstructor public class HeroScore {private Integer id;private String name;private String areaS;private String scoreS;

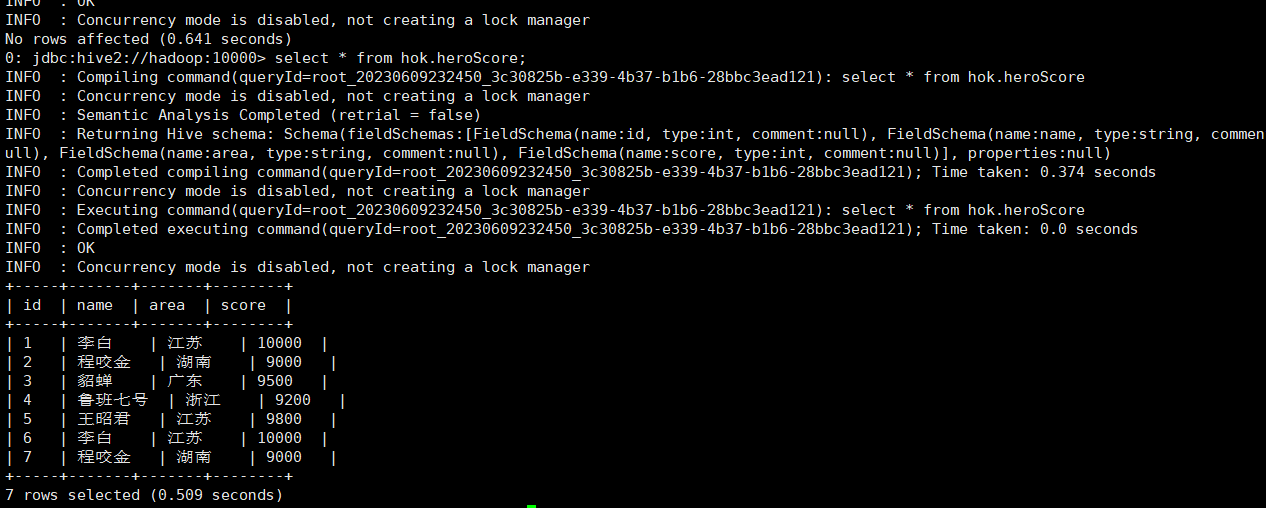

4. Dao层 1 2 3 4 5 6 7 8 9 10 11 12 13 14 package hokdata.dao.hive;import hokdata.pojo.HeroScore;import org.apache.ibatis.annotations.Mapper;import org.apache.ibatis.annotations.Select;import java.util.List;@Mapper public interface HeroScoreDao {@Select("SELECT * from heroScore") listHeroScore () ;

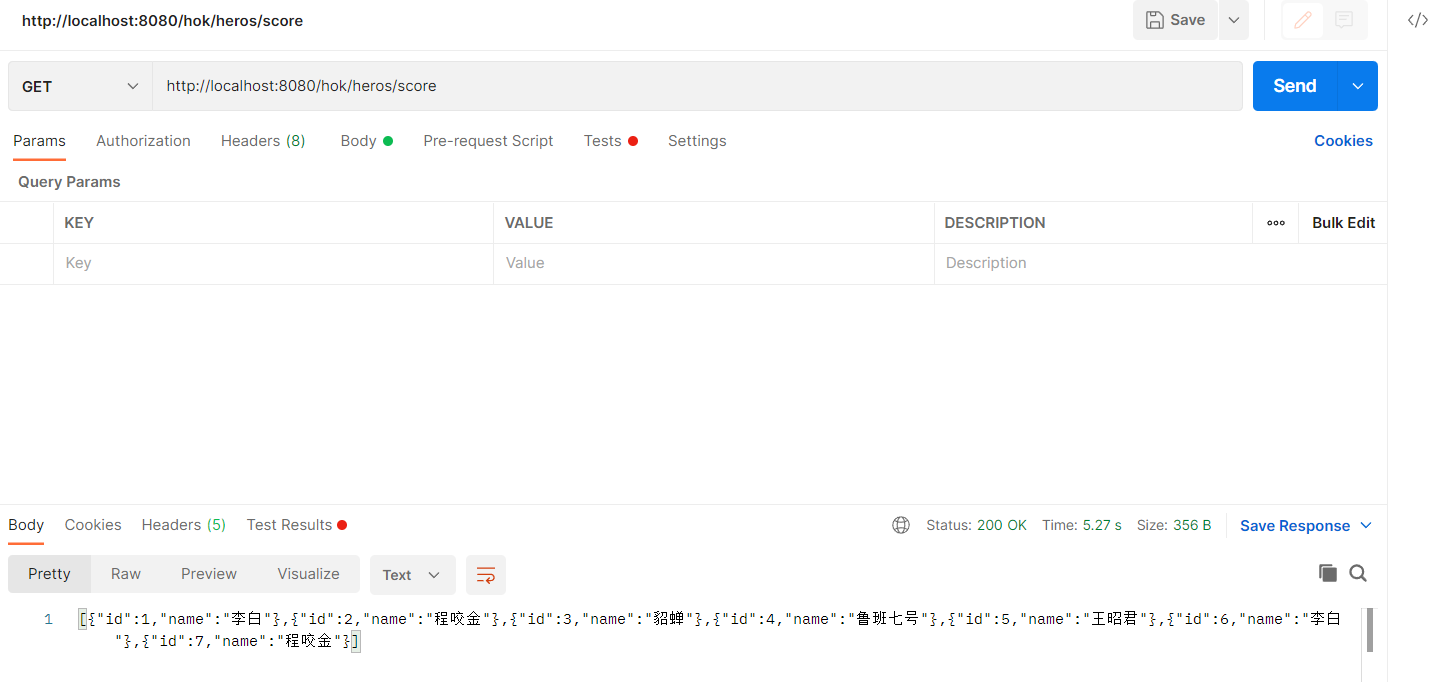

5. Web层 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 package hokdata.web;import com.alibaba.fastjson.JSON;import hokdata.dao.hive.HeroScoreDao;import hokdata.pojo.HeroScore;import org.springframework.beans.factory.annotation.Autowired;import org.springframework.web.bind.annotation.RequestMapping;import org.springframework.web.bind.annotation.RestController;import java.util.List;@RestController @RequestMapping("/heros") public class heroScoreWeb {@Autowired private HeroScoreDao dao;@RequestMapping("score") public String getHeroScore () {String strScore = JSON.toJSONString(scoreList);return strScore;

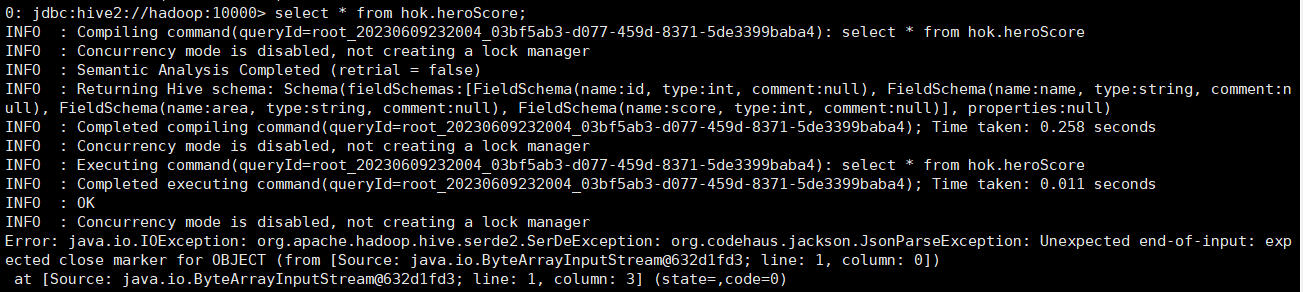

6.结果测试 7.问题1:Hive上传JSON数据报错 建表 1 2 3 4 5 6 7 8 9 10 11 12 CREATE DATABASE hok;DROP TABLE heroScore;CREATE TABLE heroScore (INT ,INT ROW FORMAT SERDE 'org.apache.hadoop.hive.serde2.JsonSerDe' AS TEXTFILE;

官方文档说明:

Starting in Hive 3.0.0, JsonSerDe is added to Hive Serde as “org.apache.hadoop.hive.serde2.JsonSerDe” (HIVE-19211). ———-通过此可以对建表语句进行设置,自动解析json数据

==没找到相应的应用实例,误以为要传.json文件格式;测试了很久发现都报错:JSON数据类型错误==

IDEA里报错 java.io.IOException: org.apache.hadoop.hive.serde2.SerDeException: java.io.IOException: Start token not found where expected

beeline里显示的错误 解决—–传txt文件 ==多次测试还是失败 ==

原因:json数据格式的问题 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 # 错误案例:{ "data" : { "hero" : [ { "id" : 1 , "name" : "李白" , "area" : "江苏" , "score" : 10000 } , { "id" : 2 , "name" : "程咬金" , "area" : "湖南" , "score" : 9000 } , { "id" : 3 , "name" : "貂蝉" , "area" : "广东" , "score" : 9500 } , { "id" : 4 , "name" : "鲁班七号" , "area" : "浙江" , "score" : 9200 } , { "id" : 5 , "name" : "王昭君" , "area" : "江苏" , "score" : 9800 } ] } }

1 2 3 # 可以识别的形式:{ "id" : 6 , "name" : "李白" , "area" : "江苏" , "score" : 10000 } { "id" : 7 , "name" : "程咬金" , "area" : "湖南" , "score" : 9000 }

==hive解析数据时,是一行一行解析,所以一组JSON数据应该在一行里,不能分段==

8.问题2:Hive解析数据的原理 发现: 文件上传到HDFS后,再通过LOAD DATA传到hive解析,原文件就没了,应该是移动操作,但是这样把文件移到hive中,此时受影响行 为0,是在输出的时候才能显示数据,并且再次传入文件,数据是可以正常增加内容显示的。那么—底层是文件数据拼接处理,每次用的时候加载进去;还是解析完文件就自动删除了,再传文件时再传输数据。

总结: 当使用LOAD DATA命令将文件加载到Hive表时,Hive会在内部将文件从HDFS移动 到表所在的存储位置。这意味着原始文件会被移动并在HDFS中的源位置被删除。

Hive并不会直接在加载数据时对原始文件进行拼接操作。当再次传入新的文件时,Hive会将新文件移动到表的存储位置 ,并将新数据与现有数据合并。在查询表时,会看到合并后的数据。

同时,Hive不会 在加载数据后自动删除 原始文件。删除原始文件可以手动在HDFS上操作。

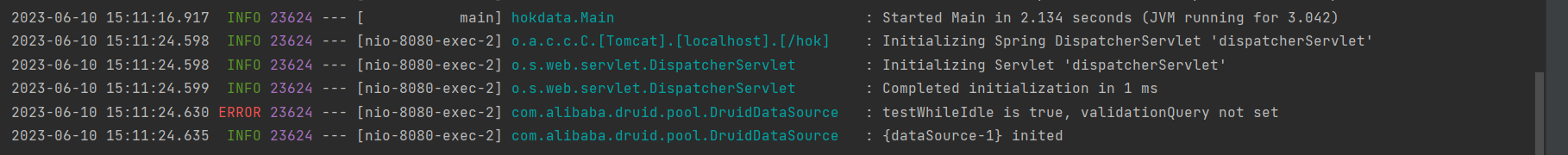

9.问题3:Druid数据源配置中启用了testWhileIdle选项,但没有设置validationQuery属性 ERROR 32060 --- [nio-8080-exec-1] com.alibaba.druid.pool.DruidDataSource : testWhileIdle is true, validationQuery not set

原因: 在Druid数据源配置中启用了testWhileIdle选项,但没有设置validationQuery属性。

testWhileIdle选项用于指定在连接空闲时是否进行连接有效性的测试。如果启用了此选项,连接池将定期检查空闲连接的有效性。

为了进行有效性测试,需要提供一个validationQuery,该查询语句将在连接上执行以验证连接是否有效。

解决: 在Druid数据源配置中设置validationQuery属性,以提供用于连接有效性测试的SQL查询。

1 2 sqlCopy codespring.datasource.druid.testWhileIdle= true = SELECT 1

validationQuery设置为SELECT 1,表示使用简单的SQL查询来测试连接的有效性。

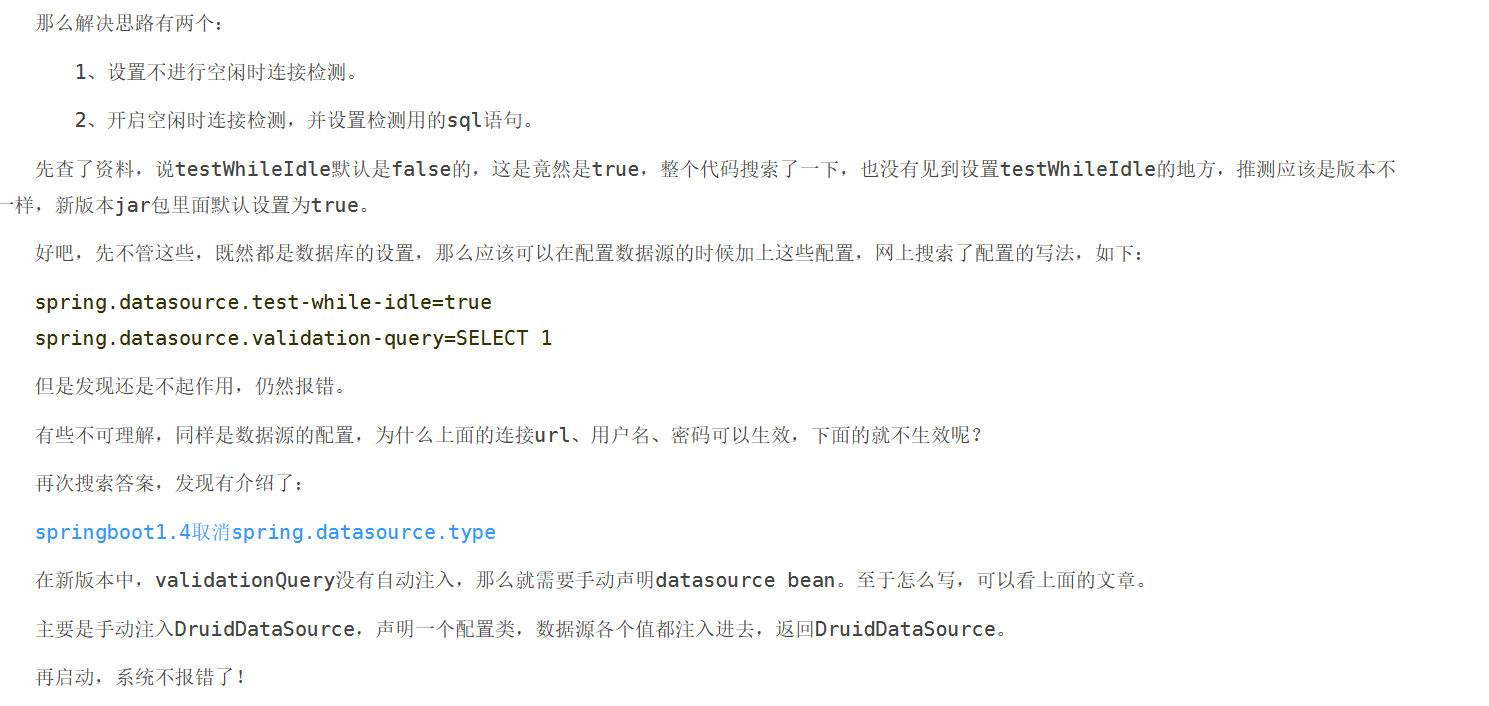

下面是查询到的一个解决方法,但是实际使用失败

不影响结果,暂时没有解决。

已解决:

项目配置文件 application.properties 1 2 3 4 5 datasource.dbs.mysql.validationQuery =SELECT 1 datasource.dbs.hive.validationQuery =SELECT 1

Hive 数据源的配置添加该数据 1 2 3 4 5 6 7 8 9 10 11 12 13 14 @Bean("hiveDataSource") public DataSource getHiveDataSource () {new DruidDataSource ();"driver-class-name" ));"url" ));"username" ));"password" ));"validationQuery" )); return dataSource;

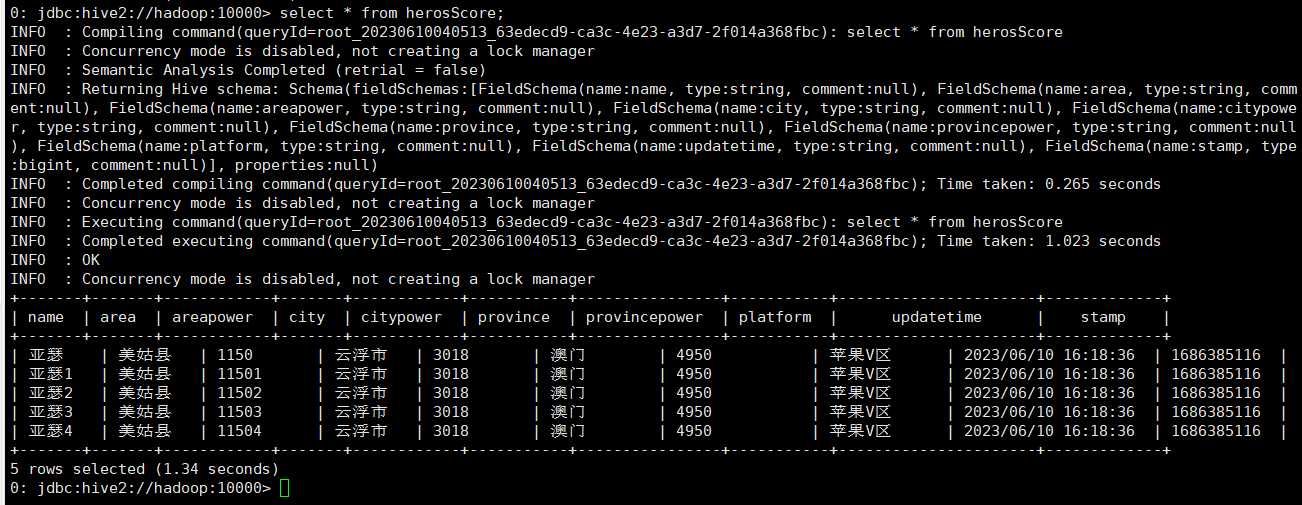

10.问题4:JSON数据传输类型不匹配 JSON数据传输,每个字段都必须有,并且数据类型一一对应 [ 爬取的数据,值都为” “包含,所以都改为String类型 ]

类型不匹配/字段不匹配 都会报错:

Error: java.io.IOException: org.apache.hadoop.hive.serde2.SerDeException: org.codehaus.jackson.JsonParseException: Current token (VALUE_STRING) not numeric, can not use numeric value accessors at [Source: java.io.ByteArrayInputStream@759f0d70; line: 1, column: 74] (state=,code=0)

==Tip:加载数据之前,可以使用 CAST 函数将相应字符串类型的字段转换为 INT 类型;但是用的是hive数据文件导入形式,不能用函数; ==

解决:

修改数据库内容,使类型都为String,字段和JSON相匹配;同时进行数据清理,脏数据重复并且数据量不多,把出现错误的数据使用txt查找-修改功能整理后,导入成功。

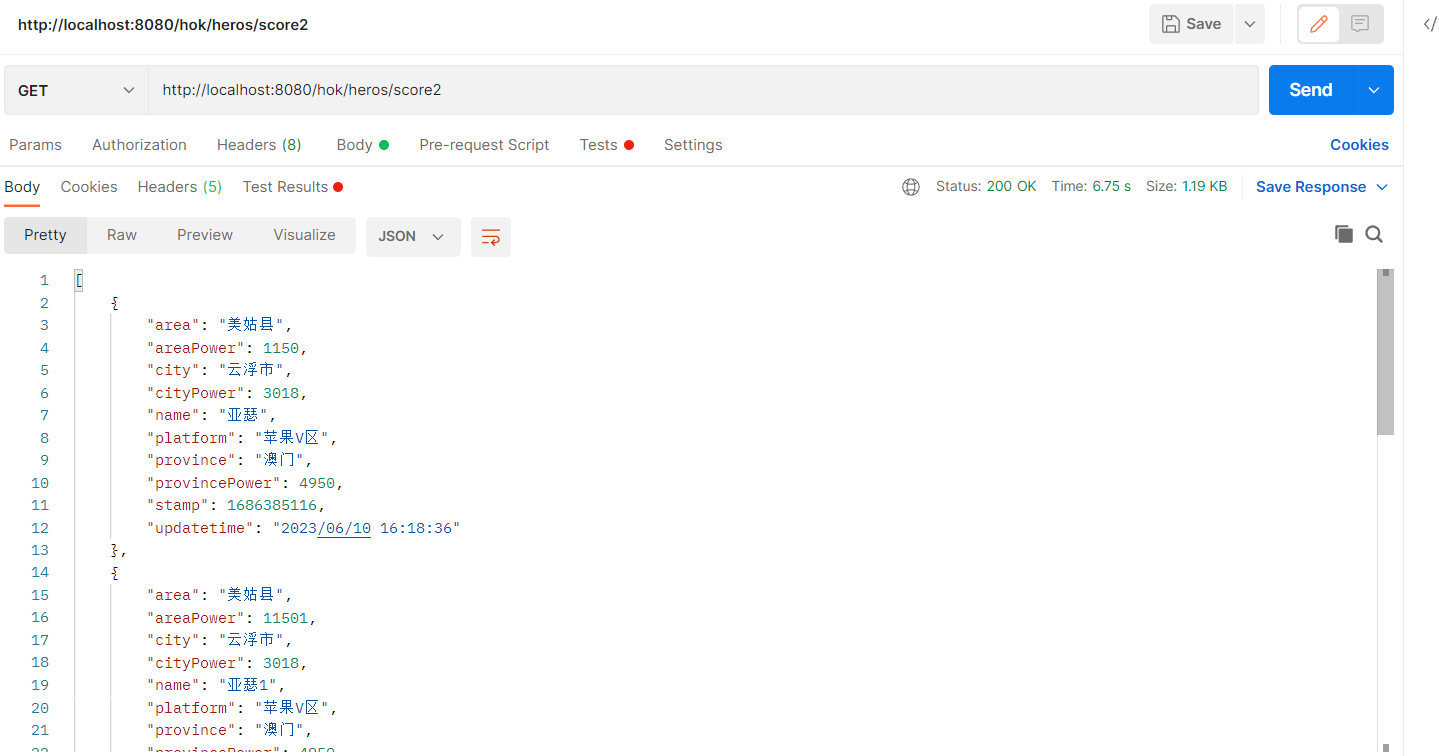

2.最后实体类的时候类型改回相应的数据类型即可,如Integer 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 package hokdata.pojo;import lombok.AllArgsConstructor;import lombok.Data;import lombok.NoArgsConstructor;@Data @AllArgsConstructor @NoArgsConstructor public class HerosScore {private String name;private String alias;private String area;private Integer areaPower;private String city;private Integer cityPower;private String province;private Integer provincePower;private String platform;private long stamp;private String updatetime;

3.结果验证 11.补:hive无字段自增 默认情况下,表的字段不支持自增功能。Hive 是建立在 Hadoop 之上的数据仓库解决方案,主要用于大规模数据的存储和分析,而不是作为一个传统的关系型数据库。

在 Hive 中实现自增功能,可行的有两种方法:

使用外部工具或编程语言:在数据加载到 Hive 表之前,使用外部工具或编程语言生成自增的值,并将其插入到 Hive 表中。

使用序列生成器:Hive 提供了序列生成器的功能,可以用于生成递增的序列值创建一个序列生成器,并在插入数据时使用该序列生成器生成自增的值。但是,序列生成器是全局的,而不是针对每个表的独立自增。因此,如果多个表需要使用自增功能,它们将共享同一个序列生成器。

==以上两种方法都是通过外部手段实现自增功能,而不是 Hive 自身的内置功能。==

补充:传JSON数据给Hive的流程总结 1.编写txt文件

1 2 { "id" : 6 , "name" : "李白" , "area" : "江苏" , "score" : 10000 } { "id" : 7 , "name" : "程咬金" , "area" : "湖南" , "score" : 9000 }

2.xftp传到虚拟机

3.上传到HDFS

hdfs dfs -put /data/data.txt /user/root/

4.LOAD DATA给hive表格(需要设置自动解析)

LOAD DATA INPATH '/user/root/data4.txt' INTO TABLE heroScore;

5. 前端测试用例 1.Ajax请求 导入js文件 1 <script type="text/javascript" src="js/jquery-3.5.1.min.js" ></script>

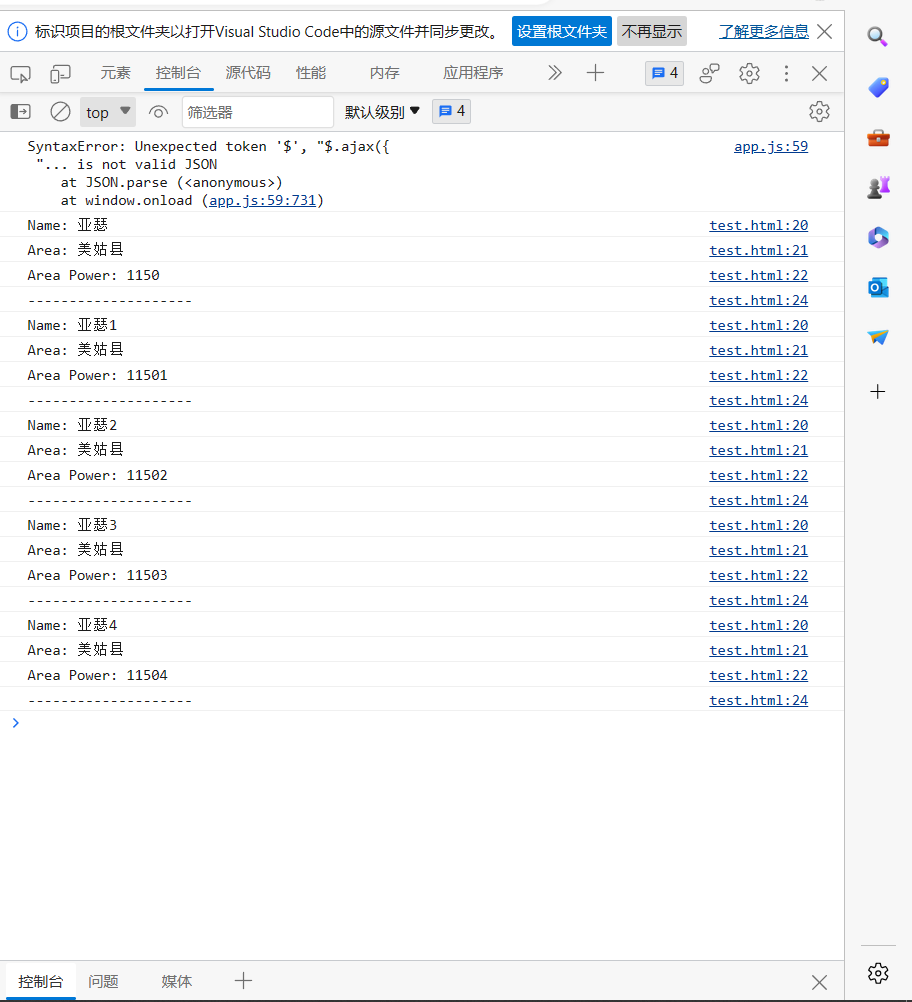

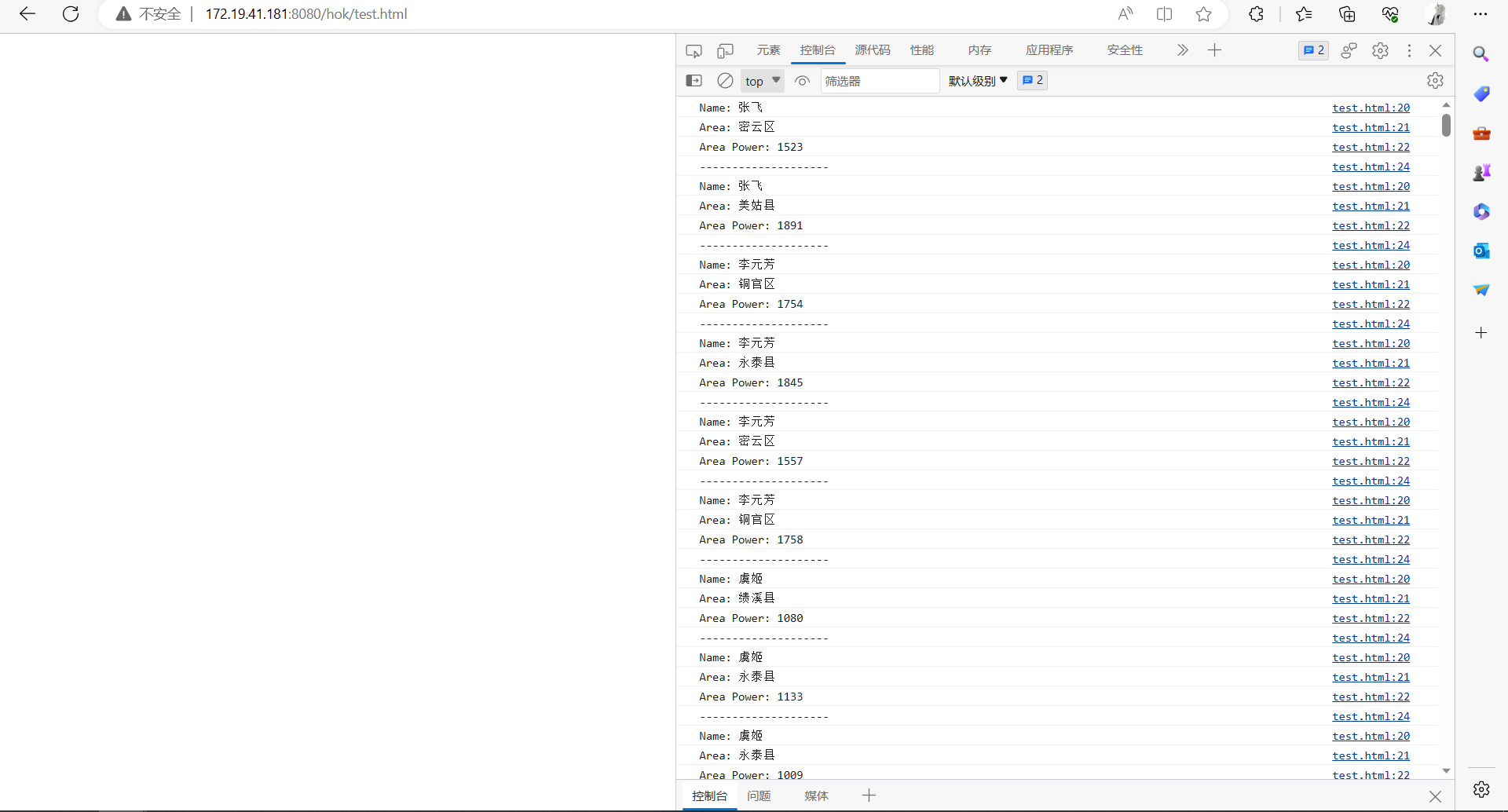

发送GET请求测试 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 $.ajax({'http://192.168.61.21:8080/hok/heros/score2' ,'GET' ,'json' ,if (Array.isArray(response)) {for (var i = 0 ; i < response.length; i++) {var item = response[i];"Name: " + item.name);"Area: " + item.area);"Area Power: " + item.areaPower);"--------------------" );else {"Name: " + response.name);"Area: " + response.area);"Area Power: " + response.areaPower);

2.控制台打印 6.给前端传数据的跨域问题 ==封装后端的数据,直接把结果返回即可,使前端通过Ajax请求直接访问url获取数据==

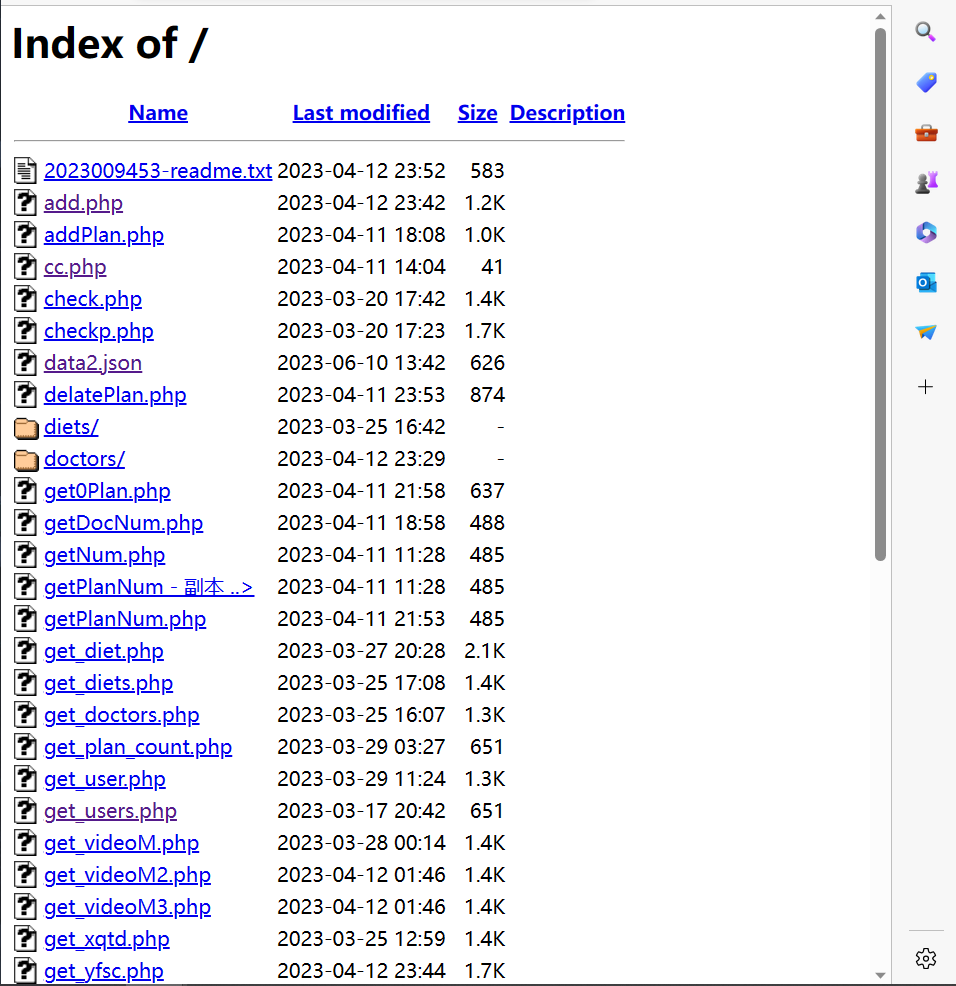

1.思路一,之前配置过Apache服务器,局域网内可以直接访问 将文件存储在这边,然后通过访问服务器网址来获得JSON数据;

2.改进—>使用反向代理,将80端口映射到8080端口,直接访问Tomcat服务器 1.httpd.conf配置文件中加载模块 LoadModule proxy_module modules/mod_proxy.so

LoadModule proxy_http_module modules/mod_proxy_http.so

2.开放配置文件 Include conf/extra/httpd-vhosts.conf

3.httpd-vhosts.conf中添加具体配置 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 <VirtualHost _default_:80 >`80 `"${SRVROOT}/htdocs" `80 >"${SRVROOT}/htdocs" 80

3.Tomcat也是服务器—>直接开放8080端口供外网访问 配置进站规则即可

==即:设置Win10防火墙规则,使得局域网能访问此电脑的Tomcat服务==

至此,可以通过局域网直接访问JSON数据

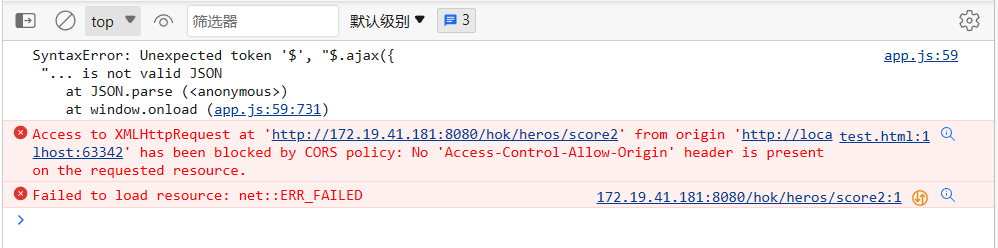

发送AJAS请求时会出现错误 Access to XMLHttpRequest at 'http://172.19.41.181:8080/hok/heros/score2' from origin 'http://localhost:63342' has been blocked by CORS policy: No 'Access-Control-Allow-Origin' header is present on the requested resource.

==即CORS(跨域资源共享)错误。==

4.CORS(跨域资源共享) 这是浏览器的安全策略,用于阻止跨域请求,从一个源发出的请求访问另一个源的资源。

如果是本机,是同一个源下,可以正常访问,如下

TIP:[即如果直接打开HTML,不走服务器,就不属于同一个源,直接打开是通过http://localhost:63342进行跨域请求访问,所以不能获得数据]

非服务器端要想获得数据,有两种解决

代理服务器:在本地开发环境中,设置一个代理服务器来解决跨域问题。代理服务器接收请求,然后转发到目标服务器上,以此绕过浏览器的跨域限制。

将AJAX请求发送到代理服务器的地址,然后代理服务器会将请求转发到http://172.19.12.1:8080/hok/heros/score2上,将响应返回给前端。

启用CORS:如果你访问目标服务器(http://172.19.12.1:8080),就可以在服务器端启用CORS,允许来自不同域的请求访问资源。

5.最终使用—Tomcat开放端口+Config配置文件 解决跨域问题 将整个项目配置为开启跨域

在config包下面新建一个CorsConfig文件:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 package hokdata.conf;import org.springframework.context.annotation.Configuration;import org.springframework.web.servlet.config.annotation.CorsRegistry;import org.springframework.web.servlet.config.annotation.WebMvcConfigurer;@Configuration public class CrosConf implements WebMvcConfigurer {@Override public void addCorsMappings (CorsRegistry registry) {"/**" )"*" )"*" )"*" );

这样就可以通过http://localhost:63342或者其他http://****直接访问了

==即:允许前端应用程序从不同的域名或端口发起请求而不受浏览器的限制==

7.代码简单优化 1.*数据传输时,要什么给什么,而不是SELECT ; 保证数据安全性;

1 @Select("SELECT DISTINCT banRate value, heroName name FROM heroInfos ORDER BY banRate DESC LIMIT 10")

2.跨域请求地址需要设定具体的,不能任何请求都通过

1 .allowedOrigins("http://86645****" , "http://172.13.12.12*" )

3.返回JSON数据字段过多问题,实体类必须和数据库字段一样,所以从存入数据库进行控制

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 package hokdata.pojo;import lombok.AllArgsConstructor;import lombok.Data;import lombok.NoArgsConstructor;@Data @AllArgsConstructor @NoArgsConstructor public class Heros {private int id;private int heroId;private float banRate;private float showRate;private float winRate;private String tRank;private String heroName;private String heroCareer;private String pick;private String ban;

存入数据库时进行控制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 String insertQuery = "INSERT INTO heroInfos (heroId, banRate, showRate, winRate, tRank, heroName, heroCareer, pick, ban) VALUES (?, ?, ?, ?, ?, ?, ?, ?, ?)" ;try (PreparedStatement statement = connection.prepareStatement(insertQuery)) {for (Map<String, Object> data : dataList) {1 , (int ) data.get("heroId" ));2 , (double ) data.get("banRate" ));3 , (double ) data.get("showRate" ));4 , (double ) data.get("winRate" ));5 , (String) data.get("tRank" ));"heroInfo" );6 , (String) heroInfo.get("heroName" ));7 , (String) heroInfo.get("heroCareer" ));8 , (String) data.get("pick" ));9 , (String) data.get("ban" ));

4.HeroInfo数据表格中数据重复,因为不同id对应不同版本的英雄,所以一个英雄有两条数据,但是搜索到的内容只需要一个 —-> sql语句去重

1 SELECT DISTINCT banRate value , heroName name FROM heroInfos ORDER BY banRate DESC LIMIT 10 ;

8.导入JSON数据进Mysql 因为是导入json文件,同时需要单独处理里面的一个数组元素,只需要JSON里的部分数,所以直接写一个JSONImporter类来操作数据库

heroInfo数组中的字段单独处理Map<String, Object> heroInfo = (Map<String, Object>) data.get("heroInfo");

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 package hokdata;import com.fasterxml.jackson.core.type.TypeReference;import com.fasterxml.jackson.databind.ObjectMapper;import java.io.File;import java.sql.Connection;import java.sql.DriverManager;import java.sql.PreparedStatement;import java.sql.SQLException;import java.util.List;import java.util.Map;public class JSONImporter {public static void main (String[] args) {String fileName = "src/main/java/hokdata/heroInfo.json" ;String url = "jdbc:mysql://localhost:3306/hok" ;String username = "root" ;String password = "123456" ;try (Connection connection = DriverManager.getConnection(url, username, password)) {ObjectMapper objectMapper = new ObjectMapper ();new File (fileName), new TypeReference <List<Map<String, Object>>>() {});String insertQuery = "INSERT INTO heroInfos (heroId, banRate, showRate, winRate, tRank, heroName, heroCareer, pick, ban) VALUES (?, ?, ?, ?, ?, ?, ?, ?, ?)" ;try (PreparedStatement statement = connection.prepareStatement(insertQuery)) {for (Map<String, Object> data : dataList) {1 , (int ) data.get("heroId" ));2 , (double ) data.get("banRate" ));3 , (double ) data.get("showRate" ));4 , (double ) data.get("winRate" ));5 , (String) data.get("tRank" ));"heroInfo" );6 , (String) heroInfo.get("heroName" ));7 , (String) heroInfo.get("heroCareer" ));8 , (String) data.get("pick" ));9 , (String) data.get("ban" ));"导入完成." );catch (SQLException e) {catch (Exception e) {

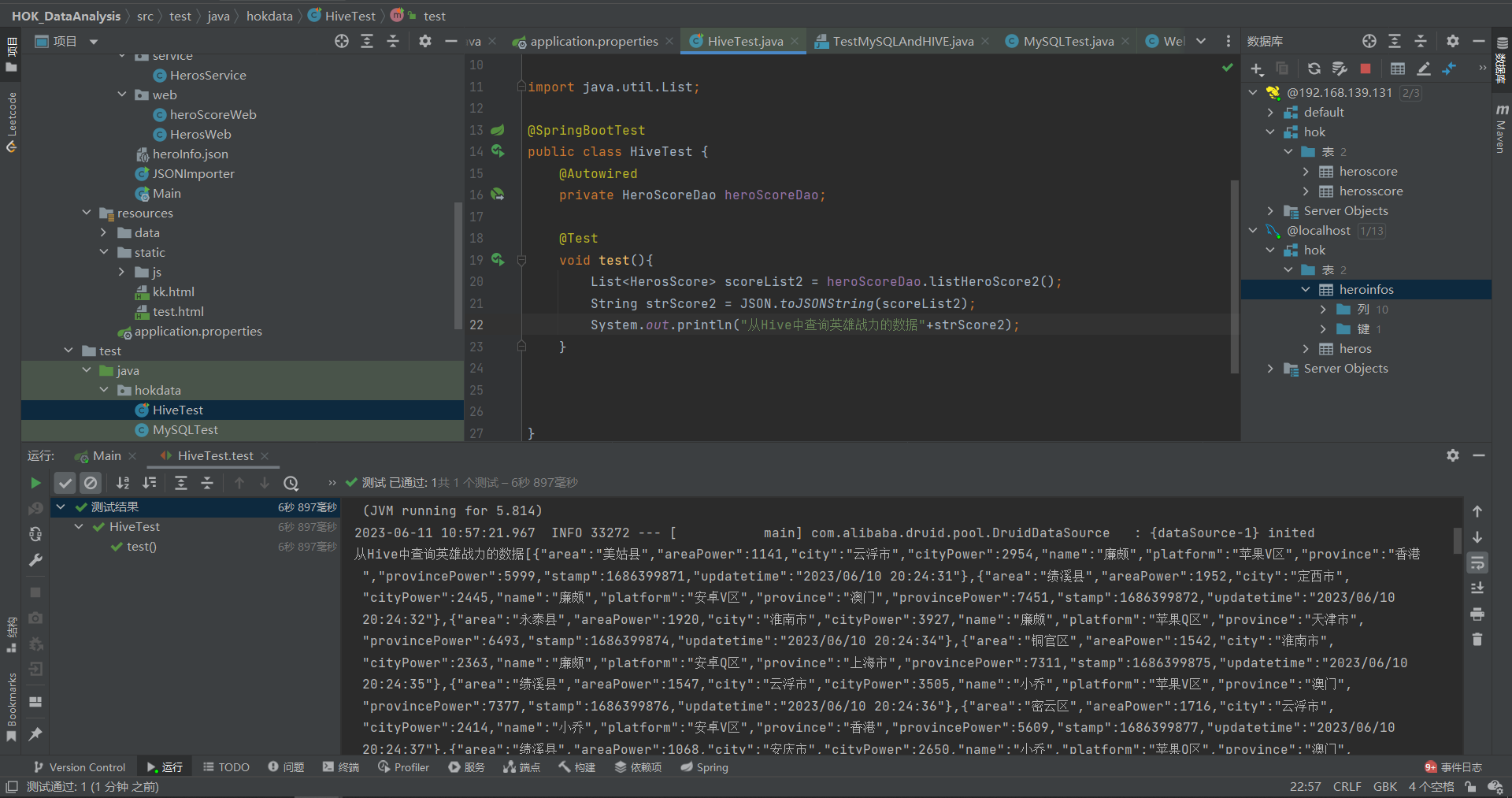

9.测试用例汇总 HiveTest.java 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 package hokdata;import com.alibaba.fastjson.JSON;import hokdata.dao.hive.HeroScoreDao;import hokdata.pojo.HeroScore;import hokdata.pojo.HerosScore;import org.junit.jupiter.api.Test;import org.springframework.beans.factory.annotation.Autowired;import org.springframework.boot.test.context.SpringBootTest;import java.util.List;@SpringBootTest public class HiveTest {@Autowired private HeroScoreDao heroScoreDao;@Test void test1 () {String strScore2 = JSON.toJSONString(scoreList2);"从Hive中查询英雄战力的数据" +strScore2);@Test void test2 () {String strScore = JSON.toJSONString(scoreList1);"从Hive中查询的英雄基础数据--测试连接" +strScore);

Local_WebTest.java 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 package hokdata;import org.springframework.boot.test.context.SpringBootTest;import org.springframework.http.HttpHeaders;import org.springframework.http.HttpMethod;import org.springframework.http.MediaType;import org.springframework.http.ResponseEntity;import org.springframework.web.client.RestTemplate;import java.util.Collections;@SpringBootTest public class Local_WebTest {public static void main (String[] args) {RestTemplate restTemplate = new RestTemplate ();HttpHeaders headers = new HttpHeaders ();"http://localhost:8080/hok/heros/form4" ,null ,String responseBody = response.getBody();

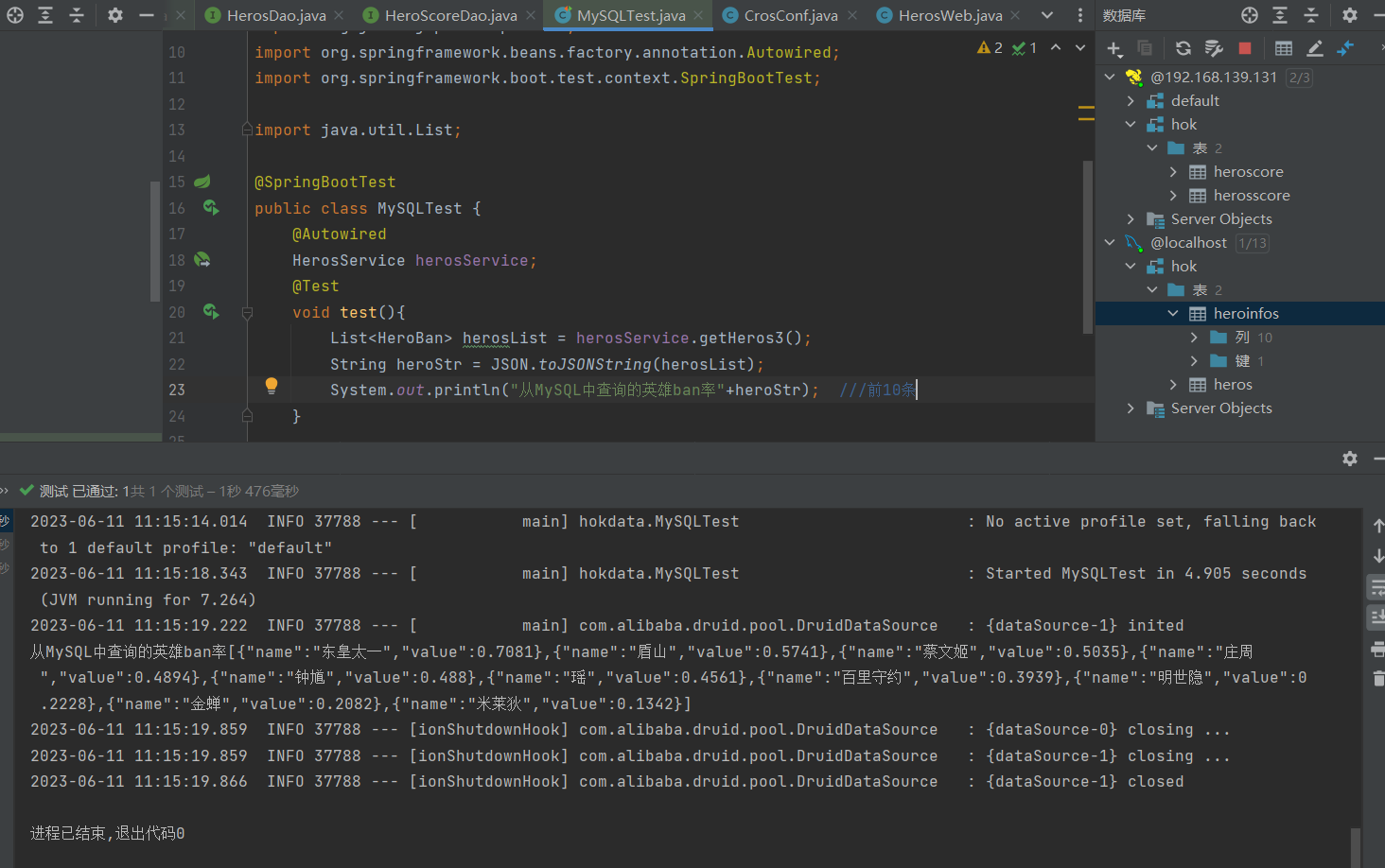

MySQLTest 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 package hokdata;import com.alibaba.fastjson.JSON;import hokdata.dao.hive.HeroScoreDao;import hokdata.dao.mysql.HerosDao;import hokdata.pojo.HeroBan;import hokdata.pojo.Heros;import hokdata.service.HerosService;import org.junit.jupiter.api.Test;import org.springframework.beans.factory.annotation.Autowired;import org.springframework.boot.test.context.SpringBootTest;import org.springframework.web.bind.annotation.RequestMapping;import java.util.List;@SpringBootTest public class MySQLTest {@Autowired @Test void test1 () {String heroStr = JSON.toJSONString(herosList);"从MySQL中查询的英雄基础信息" +heroStr);@Test void test2 () {String heroStr = JSON.toJSONString(herosList);"从MySQL中查询的英雄ban率前10条" +heroStr); @Test void test3 () {String heroStr = JSON.toJSONString(herosList);"从MySQL中查询的英雄热度排行前10条" +heroStr);@Test void test4 () {String heroStr = JSON.toJSONString(herosList);"从MySQL中查询的英雄胜率前十" +heroStr);

yumin_WebTest 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 package hokdata;import org.springframework.boot.test.context.SpringBootTest;import org.springframework.http.HttpHeaders;import org.springframework.http.HttpMethod;import org.springframework.http.MediaType;import org.springframework.http.ResponseEntity;import org.springframework.web.client.RestTemplate;import java.util.Collections;@SpringBootTest public class yumin_WebTest {public static void main (String[] args) {RestTemplate restTemplate = new RestTemplate ();HttpHeaders headers = new HttpHeaders ();"http://192.168.61.21:8080/hok/heros/form4" ,null ,String responseBody = response.getBody();